This is a re-post of a very old blog that is only accessible via the way back machine now. In the first place this continues practice highlighting old blogs that Sandro started. I am often looking for this blog and it is very hard to find now. Furthermore, I still use this XSLT in my Azure logic App XML transformations to remove duplicates. Without further ado, here is a copy of the original blog by Maina Donaldson. See the the first post in this series here.

SELECT DISTINCT in a BizTalk Map

A recent POC presented me with the following problem: I receive a PurchaseOrder line item list from SAP, containing order header information in a flattened data structure. The SALES_ORDERS node actually contains line item records (complete with order header fields), which we needed to roll up into order-level records. This would be analagous to a SQL SELECT DISTINCT on the OrderNumber. The goal of this exercise was to find an efficient way to use the mapper tool, and not resort to custom xslt altogether.

Not that easy, as I found out, and the solutions presented are credited in large part to my Magenic colleague Doug Marsh. Thanks Doug!

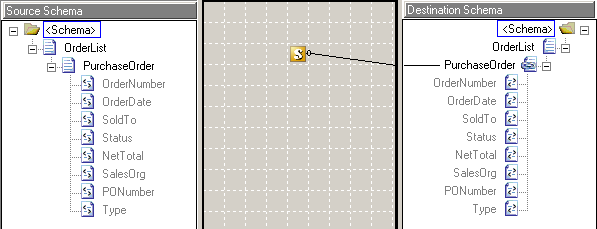

Solution 1: Source and destination schema are the same

A simple approach if the only task of the map is to SELECT DISTINCT, is to use inline XSLT with a copy-of select, to copy the entire node, and use a preceding-sibling selector to check if the same node is there already. The map simply looks like this:

And the inline xslt in the scriping functoid:

<xsl:for-each select="/*[local-name()='OrderList']/*[local-name()='PurchaseOrder'][not(OrderNumber = preceding-sibling::PurchaseOrder/OrderNumber)]">

<xsl:copy-of select="."/>

</xsl:for-each>Since there are not additional transformations on the PurchaseOrder node, this functoid in-line approach works well. Any additional transformations on the filtered node would have to be built using xslt, not the mapper.

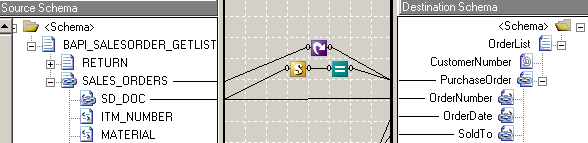

Solution 2: Source needs to be transformed to destination

This is the real-world scenario, with the SAP source schema and the canonical destination. SALES_ORDERS nodes needed to be manipulated extensively to arrive at the Order-level PurchaseOrder node. Inline xslt was not practical.

The looping functoid is to show explicitly the relationship between SALES_ORDERS and PurchaseOrder nodes. It is optional.

The logic is, again, in a scripting functoid, this time with inline c# script. The script creates a global generic list to keep the Unique Order ID in and compares the list content with the current node content on each loop.

public System.Collections.Generic.List<int> duplicateList = new System.Collections.Generic.List<int>;

public bool IsDuplicate( int OrderNumber )

{

if( duplicateList.Contains( OrderNumber ) )

return true;

duplicateList.Add( OrderNumber );

return false;

}

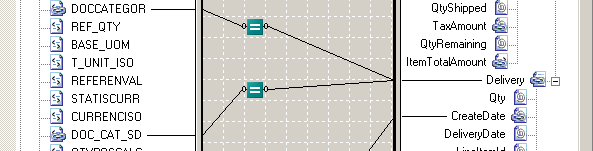

If the script functoid returns false (new order number), which is checked by the Logical Equal functoid following it, it will become an output record. This is achieved by connecting the Logical Equal output directly to the destination NODE, effectively filtering the input node-list.

This type of construct can be used to filter any map output, for example here:

Both Logical Equals check for specific values and end in the node-to-be-filtered, yielding something analagous to “SELECT * WHERE DOCCATEGOR = ‘J’ AND ‘DOC_CAT_SD = ‘C'”

Obviously, there are a few other ways to address filtering, most notably through subscriptions. But if it has to be done within a map, these have proven to work well for me.